We explain the factors to take into account when deciding whether to stop an A/B test, and how to check whether the data support a conclusion.

Why should I start an A/B test?

After thorough conversion research, we have detected a conversion problem on our website. In a meeting with everyone involved, several alternatives come up to solve the problem. To confirm which is the best solution, we test the different hypotheses with an A/B test (/n …). But after several days, the question arises: can we already confirm that one of the alternatives works better than the others?

When should I stop my A/B test?

It is important that we don’t stop a test too soon, because a false positive could lead us to the wrong decision. On the other hand, running a test for too long is not advisable either. If we have conclusive and positive results, we can already apply them to our website. If we do not have them yet, we can try a different approach. Even if there is no clear winning alternative, that doesn’t mean we have learned nothing.

What factors should we take into account?

There are three essential requirements that must be met before deciding to stop a test:

1. Two business cycles. The test must run for at least two business cycles, which can be two weeks, two months… depending on the seasonality of your sector.

2. Statistical confidence. Statistical confidence can be understood as the probability that we are making the right decision, so our objective is to maximize it. The higher the confidence level (95% is a good level), the more reliable the results of the test will be. In other words, the lower the statistical significance level (5% is a good value), the more certain we can be that it is the right decision. Normally, your testing tool provides this information.

3. Sample size. We can’t consider the test valid unless each of its alternatives has received a minimum number of visits. But how many visits do we need? What is the minimum sample size?
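The second requirement is normally reported by the testing tool, but it can also be checked by hand. The following is a minimal sketch, assuming a two-sided two-proportion z-test (your tool may use a different method). The function name and the visit counts are hypothetical, chosen only for illustration.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a, visits_a, conv_b, visits_b):
    """Two-sided p-value for the difference between two conversion rates.

    Assumption: a pooled two-proportion z-test; a testing tool may
    compute its confidence figure differently.
    """
    rate_a = conv_a / visits_a
    rate_b = conv_b / visits_b
    # Pooled rate under the hypothesis that both versions convert equally.
    pooled = (conv_a + conv_b) / (visits_a + visits_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / visits_a + 1 / visits_b))
    z = (rate_b - rate_a) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical counts: 100 of 1,000 visits convert on A, 150 of 1,000 on B.
p_value = two_proportion_p_value(100, 1000, 150, 1000)
print("significant at the 5% level:", p_value < 0.05)
```

A p-value below 0.05 corresponds to the 95% confidence level mentioned above. On its own it is not enough to stop the test: the other two requirements must also be met.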

How many visits are necessary to conclude a test?

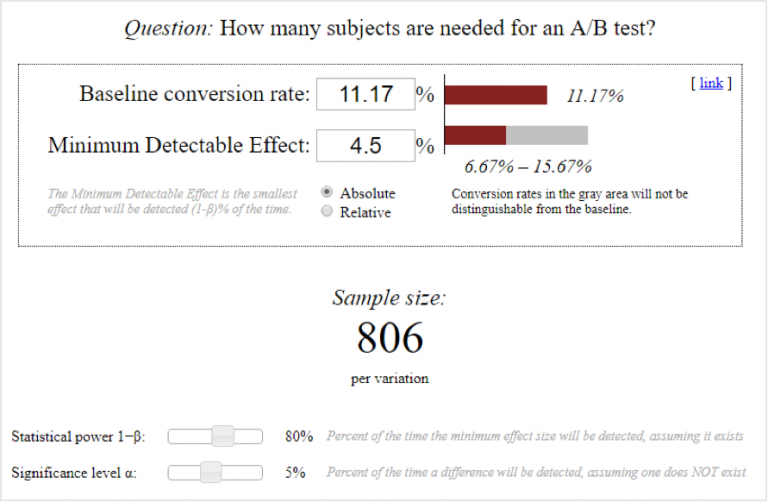

To make an easy calculation, you can use an online calculator for A/B tests. You enter three values and get the minimum sample size from them, that is, the number of visits needed in each of the alternatives for the test to be considered conclusive.

The three values that we must enter are the following:

- Original conversion rate: The conversion percentage of the control group, that is, the original version without changes.

- Impact on the conversion: The minimum variation in the conversion that we wish to detect (in absolute or relative terms).

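- Statistical significance: The probability of rejecting the null hypothesis (that there is no difference between the versions) when it is actually true, that is, the probability of a false positive. As we mentioned, the ideal level of statistical significance would be equal to or less than 5%.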
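To see how these three values combine, here is a minimal sketch of a sample-size calculation in Python. It assumes the standard formula for comparing two proportions with a two-sided test, and it adds a fourth input, statistical power (80% here), which many calculators set by default. The power value, the function name, and the example inputs are assumptions, not values taken from the calculator used in this article.

```python
from math import ceil, sqrt
from statistics import NormalDist


def min_sample_size(baseline, lift, alpha=0.05, power=0.80):
    """Visits needed in EACH variant to detect an absolute lift.

    baseline: original conversion rate (e.g. 0.10 for 10%)
    lift:     minimum absolute impact to detect (e.g. 0.05 for +5 points)
    alpha:    statistical significance level
    power:    assumed probability of detecting a real effect
    """
    p1 = baseline
    p2 = baseline + lift
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# Hypothetical inputs: 10% original conversion rate, +5 points of impact.
print(min_sample_size(0.10, 0.05))
```

Different calculators make different assumptions (one-sided or two-sided test, power level), so the figures from this sketch will not necessarily match the ones a given tool returns.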

A real case to illustrate when to stop an A/B test

On a product page designed mainly for desktop use, we detected that in the mobile version the user had to scroll several times to reach the CTA that opens a contact form. Since the objective of that page was to get the user’s contact details, we thought we should give that CTA more visibility to increase the conversion rate.

But then a question arose: which would be more effective at increasing the conversion? Would it work to place the CTA in a higher position? Or would the original version work better? (This way the user has the opportunity to get information before arriving at the CTA.) We knew that an A/B test would answer the question, so we got down to work and set up a test to check our hypothesis.

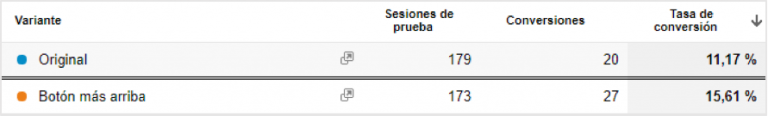

After running our test for several days, we saw that the variant seemed to have a better conversion rate. Specifically, the version in which the contact CTA was shown higher up seemed to have an impact on the conversion of +4.5 percentage points.

Once the two business cycles had passed and a statistical confidence of 95% had been reached, how many visits would we need to be able to conclude that the variant has an impact of +4.5 percentage points on the conversion? We entered the data in the calculator to estimate a minimum number of users:

We would need at least 806 visits in each of the variants of our test to be able to confirm (with 95% statistical confidence) that placing the CTA higher up would improve the conversion rate of the product page by +4.5 points. That is, we would still have to wait a few more days to be able to make a decision based on conclusive data.

However, the impact on conversion will still vary after that time, so the minimum sample size will also vary. What if the impact on conversion were less than 4.5 points?

If the impact on conversion were +2.5 points, we would need 2,562 visits in each of the variants of our test to reach a conclusion. That is, the volume of visits needed multiplies as the impact on conversion decreases.
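As a rough cross-check of these two figures, the required sample size scales approximately with the inverse square of the impact we want to detect. The sketch below applies that rule of thumb to the 806 visits obtained above. The function name is hypothetical, and the rule is only an approximation because it ignores the change in variance as the conversion rate changes.

```python
def scaled_sample_size(known_n, known_lift, new_lift):
    """Rule of thumb: required visits grow with 1 / lift**2."""
    return round(known_n * (known_lift / new_lift) ** 2)


# From 806 visits at +4.5 points to an estimate at +2.5 points.
print(scaled_sample_size(806, 4.5, 2.5))  # prints 2611
```

The estimate of about 2,600 visits is close to the 2,562 returned by the calculator, which suggests that the rule of thumb is good enough for a quick sanity check.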

Bonus track: How can I reduce the minimum sample size?

If you have entered these data and realized that you need far more traffic than you thought to conclude your test, you should know that there are two ways to reduce the minimum sample size:

• With a greater impact on the conversion: The more drastic the changes introduced in the A/B test, the more likely they are to have an impact on the conversion. To detect a large variation in the conversion, a smaller sample is needed, while to detect the impact of a small change (one word of a whole text, for example) many more visits are necessary.

• With a higher statistical significance level (lower statistical confidence): If we want to be more certain of the result, we will need more visits to test our hypothesis, while if we settle for a lower level of certainty, we can draw conclusions with a lower volume of visits.

Conclusion

When running an A/B test, we must take into account the volume of traffic that the site has, so that we can get a conclusive result in a reasonable period of time.
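To turn the minimum sample size into a rough duration, we can divide the total visits needed by the daily traffic that enters the test. The following is a minimal sketch under several assumptions: traffic is split evenly between variants, a business cycle lasts 7 days, and the test is rounded up to whole cycles with a minimum of two. The function name and the traffic figures are hypothetical.

```python
from math import ceil


def days_to_finish(visits_per_variant, variants, daily_visits,
                   cycle_days=7, min_cycles=2):
    """Estimated test length in days, rounded up to whole business cycles."""
    days_for_sample = ceil(visits_per_variant * variants / daily_visits)
    # Never stop before the minimum number of business cycles has passed.
    cycles = max(min_cycles, ceil(days_for_sample / cycle_days))
    return cycles * cycle_days


# 806 visits per variant, 2 variants, hypothetical 100 test visits per day.
print(days_to_finish(806, 2, 100))  # prints 21
```

With ten times that traffic, the sample would be reached in two days, but the estimate stays at 14 days because of the two-cycle minimum.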